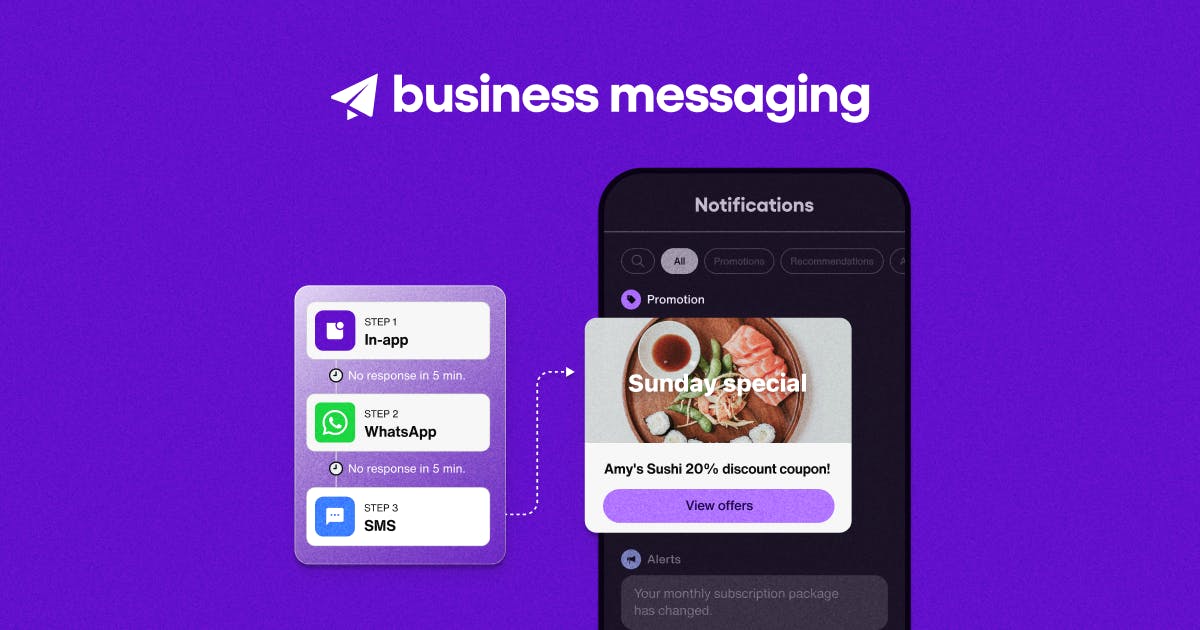

Discover how Sendbird Business Messaging blends high-performing and cost-effective communication channels in one omnichannel messaging platform. [...]
